AI Is Finding Critical Vulnerabilities Faster Than Teams Can Fix Them

Security testing hasn’t kept pace with how software is built or how it’s attacked. While development cycles have accelerated and AI-powered attackers can probe systems continuously, most security practices still rely on periodic assessments and slow remediation. The result is a widening gap between when vulnerabilities are introduced and when they’re discovered or fixed. In our recent analysis of autonomous AI-driven pentests across production systems, that gap becomes impossible to ignore: modern applications aren’t just vulnerable, they’re continuously vulnerable.

Guest post by Huzaifa Ahmad, Founder, Hex Security

Over the past 90 days, we've run autonomous pentests against a range of production systems, and the results are hard to ignore.

Modern applications aren’t just vulnerable. They’re continuously vulnerable.

The problem isn’t that teams don’t test for security. It’s that attackers are now operating at machine speed, while testing and remediation still happen on human timelines.

As AI accelerates vulnerability discovery, the window between a bug being introduced and exploited is shrinking. If testing and fixes don’t move at the same speed, that gap becomes the attack surface.

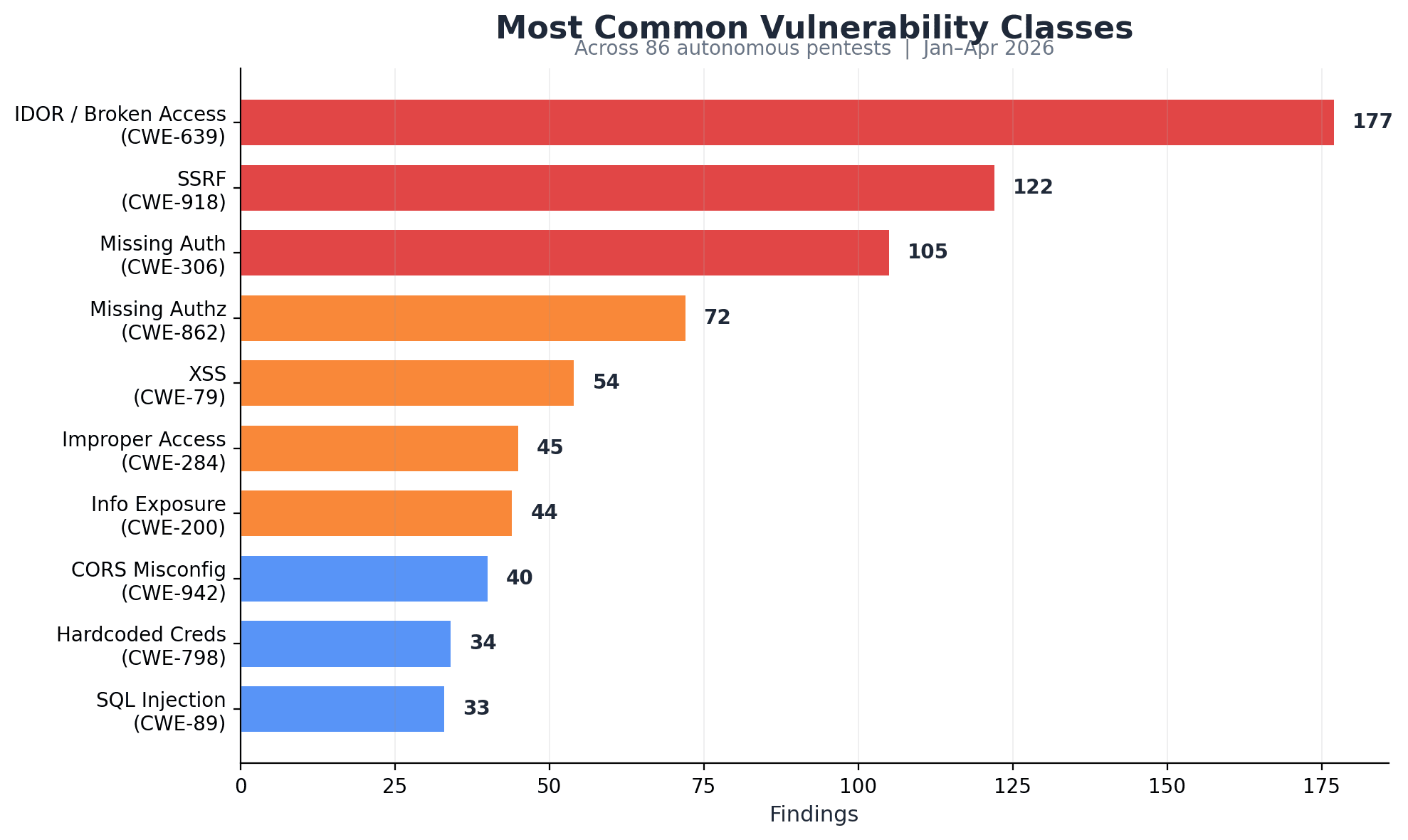

What we’re seeing in the wild

Across 28 companies, our agents identified:

~2000 total vulnerabilities

44.6% critical or high severity (829 findings)

21.6 vulnerabilities per scan on average (median: 15)

65.1% of scans uncovered at least one critical issue

Peak: 88 vulnerabilities in a single scan

These aren’t theoretical risks. Every finding is validated with a working proof-of-concept exploit.

Nearly half of all the vulnerabilities we found are critical or high severity, and nearly two-thirds of scans uncovered at least one critical issue.
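The headline numbers can be cross-checked with a little arithmetic. The Python sketch below uses only the figures quoted above. The implied total and the implied scan count are derived estimates, not numbers reported in this post.

```python
# Back-of-the-envelope check on the reported figures.
# Inputs are quoted from the post; everything derived from them is an estimate.
critical_or_high = 829          # reported critical or high severity findings
critical_or_high_share = 0.446  # reported share of all findings (44.6%)
mean_per_scan = 21.6            # reported average findings per scan

# If 829 findings are 44.6% of the total, the total is 829 / 0.446.
implied_total = critical_or_high / critical_or_high_share

# Dividing the total by the per-scan average gives an approximate scan count.
implied_scans = implied_total / mean_per_scan

print(f"implied total findings: {implied_total:.0f}")   # 1859
print(f"implied number of scans: {implied_scans:.0f}")  # 86
```

An implied total of roughly 1,859 is consistent with the rounded "~2000" figure. The mean (21.6) sitting well above the median (15) suggests a skewed distribution, where a minority of scans, such as the 88-finding peak, pull the average up.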

What’s more concerning is the type of vulnerabilities behind these numbers. They’re not simple misconfigurations or outdated dependencies. They’re deeper issues in how applications are designed and behave.

These aren’t simple bugs

The most common issues we’re seeing are logic flaws.

A logic flaw means the system is behaving exactly as coded, but the design itself is insecure.

These aren’t configuration mistakes or outdated libraries. They’re bugs in how the application actually works, how data flows, how permissions are enforced, and how systems trust each other.

Here’s what that looks like in practice:

IDOR (Insecure Direct Object Reference): 177 findings

Attackers can access or modify other users’ data simply by changing identifiers (e.g. user IDs, file IDs). This can expose sensitive data at scale or even enable account takeover.

SSRF (Server-Side Request Forgery): 122 findings

Attackers can trick the server into making requests on their behalf, often to internal systems. This can expose internal APIs, cloud credentials, or infrastructure that should never be publicly accessible.

Missing authentication: 105 findings

Sensitive endpoints or actions are accessible without logging in. This means anyone on the internet can trigger critical functionality or access protected data.
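SSRF is perhaps the least intuitive of the three to guard against. As a minimal sketch, assuming a service that fetches user-supplied URLs, the hypothetical helper `is_safe_outbound_url` below refuses any URL whose host resolves to a non-public address, such as loopback, private ranges, or the link-local address 169.254.169.254 commonly used for cloud metadata. The function name and the policy are illustrative assumptions, not part of any tool mentioned in this post.

```python
import ipaddress
import socket
from urllib.parse import urlparse

def is_safe_outbound_url(url):
    """Return True only if every address the host resolves to is public.

    Hypothetical helper for illustration; a real policy may need to be stricter.
    """
    parsed = urlparse(url)
    # Only plain web schemes with an explicit host are allowed.
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        # Resolve the host; literal IP addresses resolve to themselves.
        infos = socket.getaddrinfo(parsed.hostname, None)
    except socket.gaierror:
        return False
    for info in infos:
        address = ipaddress.ip_address(info[4][0])
        # is_global is False for loopback, private, and link-local ranges.
        if not address.is_global:
            return False
    return True

print(is_safe_outbound_url("http://169.254.169.254/latest/meta-data/"))  # False
print(is_safe_outbound_url("http://127.0.0.1:8080/admin"))               # False
print(is_safe_outbound_url("file:///etc/passwd"))                        # False
```

A check like this is only one layer. The hostname is resolved again when the request is actually made, so a real deployment would also need to pin the resolved address or enforce the same policy at the network level.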

These vulnerabilities are particularly dangerous because they exploit how the application behaves, not just how it’s configured.

Traditional scanners struggle here because they don’t understand behavior. They look for signatures, not logic.
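To make the difference between signatures and behavior concrete, here is a toy sketch in Python. Everything in it is hypothetical: two in-memory request handlers stand in for an application, one with a deliberate IDOR and one with an ownership check, and `probe_idor` plays the attacker by logging in as one user and asking for another user's record. Neither handler contains anything a signature would match. The flaw only shows up when you exercise the logic.

```python
# Hypothetical in-memory application state.
RECORDS = {1: ("alice", "alice's address"), 2: ("bob", "bob's address")}
SESSIONS = {"token-alice": "alice", "token-bob": "bob"}

def vulnerable_get_record(token, record_id):
    """Authenticates the caller but never checks who owns the record."""
    if token not in SESSIONS:
        return 401, None
    if record_id not in RECORDS:
        return 404, None
    return 200, RECORDS[record_id][1]  # bug: no ownership check

def fixed_get_record(token, record_id):
    """Same endpoint, but records are only returned to their owner."""
    user = SESSIONS.get(token)
    if user is None:
        return 401, None
    record = RECORDS.get(record_id)
    # Treat "missing" and "not yours" identically so IDs cannot be enumerated.
    if record is None or record[0] != user:
        return 404, None
    return 200, record[1]

def probe_idor(handler, attacker_token, victim_record_id):
    """Return True if the attacker's session can read the victim's record."""
    status, body = handler(attacker_token, victim_record_id)
    return status == 200 and body is not None

print(probe_idor(vulnerable_get_record, "token-alice", 2))  # True
print(probe_idor(fixed_get_record, "token-alice", 2))       # False
```

The probe knows nothing about how either handler is written. It only observes that one of them hands over data it should not.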

Why AI is finding more

Up until recently, finding and exploiting these kinds of vulnerabilities required time, skill, and manual effort.

That has changed.

AI agents can now interact with applications the way a human attacker would, but at a completely different scale and speed.

They don’t just scan endpoints; they explore them. They:

Test edge cases

Chain multiple steps together

Iterate quickly across thousands of possibilities

Instead of checking for known issues, they actively probe how an application behaves and look for ways to break it. They never sleep. Their workday never ends. They have the world's knowledge base memorized.

This shift is what’s driving the results we’re seeing: consistent discovery of critical issues across the majority of scans.

Why Open Source Can Accelerate Vulnerability Discovery

Open source changes how vulnerabilities are discovered.

Imagine picking a lock. When you know the inner workings of the lock, it’s a lot easier to pick it.

When AI systems have access to source code, they can analyze every code path directly instead of inferring behavior from the outside.

In controlled benchmark testing using the publicly available XBow validation suite, access to source code increased vulnerability detection by approximately 20% compared to black-box testing.

We see this gap in real-world scenarios, too.

With full visibility, AI agents can:

Trace data flows end-to-end

Identify broken assumptions in business logic

Explore edge cases much more efficiently

This makes logic flaws significantly easier to find and exploit.

As more applications are built in the open, and as AI systems improve, the speed of vulnerability discovery continues to increase.

That said, being closed source doesn’t make you safe by default.

Security through obscurity has never been a real defense. A determined attacker with enough compute and enough tokens to burn can still enumerate, fuzz, and eventually uncover exploitable paths, even without direct access to the code.

Which means the takeaway isn’t about choosing open or closed source. It’s about adapting how you test and secure systems.

Regardless of architecture, traditional point-in-time assessments miss the mark. Codebases are evolving at machine speed, and so are the attack surfaces.

The real problem: greater speed = lower cost

A few years ago, finding and exploiting vulnerabilities required time, skill, and patience.

Today, that timeline has collapsed.

AI systems can:

Run thousands of tests in parallel

Continuously explore new code paths

Chain exploits together automatically

This means vulnerabilities are being discovered faster than ever before, often within hours or days of being introduced.

But most organizations haven’t adapted to this shift.

Security testing is still treated as a periodic event:

Once a year

Maybe once a quarter

And remediation often moves even slower, waiting on prioritization, engineering bandwidth, and release cycles. Even the time it takes to push code to production creates gaps that faster systems can exploit.

This creates a widening gap:

Vulnerabilities are discovered at machine speed

Fixes are deployed on human timelines

That gap is the real attack surface.

If a critical vulnerability exists for days or weeks before it’s fixed, it doesn’t matter how it was found. It’s exploitable.
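A rough way to put numbers on that gap is sketched below in Python. It rests on simplifying assumptions that are ours, not measurements from this post: bugs are introduced at a uniformly random point between tests, every test finds every bug, and remediation takes a fixed 14 days. Under those assumptions the average wait until the next test is half the testing interval.

```python
def mean_exposure_days(test_interval_days, remediation_days):
    """Average days a bug stays live under the simplifying assumptions above.

    Half the testing interval (average wait until the next test)
    plus the time taken to ship a fix.
    """
    return test_interval_days / 2 + remediation_days

# The 14-day remediation time is an illustrative assumption.
for label, interval in [("annual", 365), ("quarterly", 90), ("daily", 1)]:
    print(label, mean_exposure_days(interval, remediation_days=14))
# annual 196.5
# quarterly 59.0
# daily 14.5
```

Even in this simple model, moving from annual to daily testing cuts average exposure from months to about two weeks, at which point the remediation time is almost the entire window.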

The traditional model of “test, report, fix later” simply doesn’t hold up when both attackers and discovery systems are operating continuously.

That model is already outdated.

What actually works

The shift isn’t about tools. It’s about approach.

Security needs to move from:

Point-in-time testing → continuous testing

In practice, this isn’t just a tooling change.

It requires rethinking how security fits into the development lifecycle.

Continuous testing typically combines:

Automated systems that run tests continuously, not just once a year

Security integrated directly into CI/CD pipelines

Developers owning and fixing issues as part of normal workflows

Periodic human testing for deeper, more complex attack paths
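As one illustration of what pipeline integration might look like, the Python sketch below is a small gate script that fails a build when unresolved critical or high severity findings exist. The findings file and its fields (`title`, `severity`, `status`) are a hypothetical format chosen for this example, not the output of any particular scanner.

```python
import json
import sys

# Severities that should stop a release (an assumed policy).
BLOCKING = {"critical", "high"}

def blocking_findings(findings):
    """Return findings that are critical or high and not yet marked fixed."""
    return [
        f for f in findings
        if f.get("severity", "").lower() in BLOCKING
        and f.get("status") != "fixed"
    ]

def main(path):
    # The file is assumed to hold a JSON list of finding objects.
    with open(path) as fh:
        findings = json.load(fh)
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKING {f.get('severity')}: {f.get('title')}")
    # A non-zero exit code makes most CI systems fail the job.
    return 1 if blockers else 0

if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

Run as a pipeline step with the path to the findings file as its only argument, a script like this turns a security report into something the build itself enforces, which keeps fixes inside the normal developer workflow.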

This is not something most teams can solve with headcount alone.

Manual testing doesn’t scale to match the speed of modern development or modern attackers.

That’s why we’re seeing a shift toward automation and, in many cases, outsourcing continuous testing to systems that can operate at machine speed.

The urgency here is real.

What next?

AI is already accelerating vulnerability discovery. Attackers are not waiting for your next scheduled pentest.

If your application is changing daily, your security testing and your ability to fix issues need to keep up.


Open source systems need to acknowledge and own the additional exposure that comes with code visibility. Closed source systems, meanwhile, cannot rely on obscurity and will need to improve their security posture as well.

The gap between how fast we build software and how often we test it is widening.

AI is accelerating both sides, but right now, attackers have the advantage.

If you aren't actively scaling security to match the threat, your systems will fail.

The benchmark referenced above (XBow) is publicly available on GitHub. Our results combine controlled benchmark testing with real-world data from production systems across our platform.
